Data Quality Starts with You - Accountability via Survey Design

Now that we know how to write great questions and avoid common pitfalls with particular question types, we are ready to assemble a questionnaire that elicits high-quality data and contributes to a positive participant experience.

In the spirit of agile research, we regularly reflect on how we can improve our research abilities, and we strive to create a more seamless experience for survey participants. Our goals are:

- To improve response rates on our tests.

- To encourage respondents to give us the best, most accurate data.

- To enable our customers to learn faster and innovate smarter from high-quality data.

- To reverse the damage being done to respondents.

To achieve these goals, here is what we’ve learned regarding questionnaire design:

1. Start with a single clear, concise objective.

Before investing time and money in research, it’s important to know your research goal. And be specific. Specificity helps to prioritize questions and distinguish between “nice to have” and “must have” data points. At Feedback Loop, we strive to keep survey length at five minutes or less, so being precise about the research goal is even more important in agile research.

When customers come to us with multiple objectives for research, we recommend separating them into individual tests. We find that we get better-quality data and more actionable insights when respondents focus on a single topic than when numerous objectives and tasks are crowded into a single questionnaire. Effectively, this enables our customers to do more with less.

2. Treat your screener as a funnel

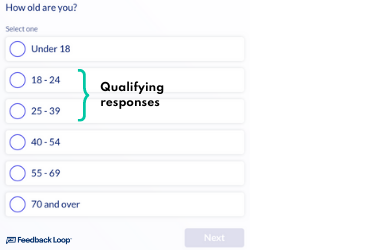

Before asking anything related to your research objective, participants must be evaluated to determine if they meet the criteria required to partake in the research. Feedback Loop’s guiding principle for screening is to treat your screener as a funnel - start as general as possible and get more specific as the screener progresses. As with everything else, keep your screener short.

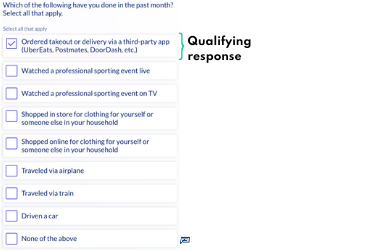

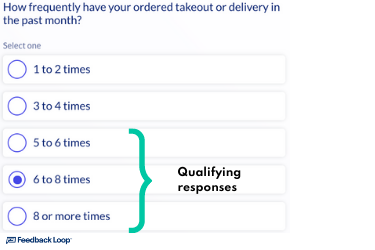

Let’s say you are looking for people ages 18-39 who order food 5 or more times a month from a food delivery app like UberEats, DoorDash, Postmates, etc.

Using Feedback Loop’s standard, the screener would look something like this:

Followed by:

Then:

Asking questions in a sequential order ensures that you are getting realistic, logical responses.
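The funnel idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Feedback Loop's implementation: the `ScreenerStep` type, the field names, and the middle "uses a delivery app" step are hypothetical, while the age range and order frequency come from the example above. Steps run from most general to most specific, and screening stops at the first step a respondent fails.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ScreenerStep:
    """One screening question plus the rule that decides who continues."""
    name: str
    qualifies: Callable[[dict], bool]


# Hypothetical funnel, ordered from most general to most specific.
FUNNEL = [
    ScreenerStep("age", lambda r: 18 <= r["age"] <= 39),
    ScreenerStep("uses_delivery_app", lambda r: r["uses_delivery_app"]),
    ScreenerStep("orders_per_month", lambda r: r["orders_per_month"] >= 5),
]


def screen(respondent: dict, funnel=FUNNEL) -> Optional[str]:
    """Return the name of the first failed step, or None if the respondent qualifies.

    Later steps are never evaluated once a step fails, so a terminated
    respondent is not asked questions whose answers would go unused.
    """
    for step in funnel:
        if not step.qualifies(respondent):
            return step.name
    return None
```

For instance, `screen({"age": 45})` returns `"age"` without ever touching the later, more specific questions.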

3. Be pragmatic in data collection

Agile research is intended to be terse and directional. With a limited number of questions, every data point matters.

It is important to take an economical approach to data collection and avoid collecting unnecessary data. As a general rule of thumb, if you aren’t going to use the data, don’t collect it.

To illustrate, assume you are conducting research for a quick service restaurant and you are gathering aided awareness:

Which of the following quick service restaurants have you heard of?

McDonald’s

Burger King

Wendy’s

Chick-fil-A

Popeyes

Chipotle

Other (specify)

If you don’t intend to look at or use the data collected in “Other”, there is no need to collect it.

This is especially true in screening criteria as many researchers like to screen respondents in full before terminating them. If you are going to use the data, pay the respondent. If you’re not going to use the data, let them move on to other paid opportunities to preserve their affinity for participating in research.

4. Avoid Repetitive Questions

Feedback Loop uses some of the best sourcing partners in the industry to reach our audiences. We rely heavily on our sourcing partners to profile their respondents in depth and pass the profiling data to us along with the respondent. This approach enables us to spend our limited time with respondents learning their opinions and preferences, while maintaining the ability to slice responses by the demographic profiling from the sourcing partner. Win-win!

There are instances where we need to screen further than our sourcing partners’ profiling allows, but we avoid, at all costs, asking questions we already have the answers to.

Think of respondents as people - how do you feel when someone asks you the same question multiple times in a conversation? Annoyed? Frustrated? Bored? Uninterested? Are you likely to continue the conversation or provide any meaningful input moving forward? Likely not!
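One way to picture this rule is as a filter over the screener: before asking anything, drop every question whose answer already arrived with the respondent. The sketch below is a minimal illustration; the function name and the profile field names are hypothetical and do not describe any real sourcing-partner data format.

```python
def questions_to_ask(screener_fields, partner_profile):
    """Keep only the fields the sourcing partner has not already supplied.

    A field counts as already answered when the profile holds a
    non-None value for it.
    """
    return [
        field
        for field in screener_fields
        if partner_profile.get(field) is None
    ]


# Hypothetical profile passed along with the respondent.
profile = {"age": 27, "gender": None}
remaining = questions_to_ask(["age", "gender", "orders_per_month"], profile)
```

Here `remaining` holds only `gender` and `orders_per_month`, so the respondent is never asked their age a second time.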

5. Keep Surveys Short

In the digital age, research is competing for participants’ time and attention. To keep our participation rates high and encourage authentic feedback, our surveys have a maximum of 10 questions. Based on the tens of thousands of tests run on the Feedback Loop platform, we have discovered that exceeding 10 questions leads to diminished participation rates and poor data quality.

To serve our customers’ best interests, we cap surveys at 10 questions to mitigate drop-off. When we exceed this limit, we see fewer respondents completing the survey, which leaves smaller sample sizes to drive our customers’ decisions. What’s worse, respondents who do complete the tests provide less useful feedback as the experience progresses, giving inarticulate or even unreliable responses.

This can also have detrimental long-term effects on the research industry if left unchecked. The more research fatigues respondents, the more unreliable data becomes. And further, the more we fatigue respondents, the more research participation becomes boring and arduous, and thus the lifeline of our industry wanes.

6. Limit Open Ends

Of these 10 questions, we recommend making just two open-ended. Asking too many open-ended questions imposes a higher cognitive load on participants. We’ve seen respondents lose interest and quit surveys when asked too many open-ended questions. Yes, open ends deliver rich information and are a value-add to directional research, which is why 20% of DISQO Experience Suite surveys provide open-ended feedback. It’s all about balance!
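The two limits described above (at most 10 questions, at most two of them open-ended) are easy to check before a survey goes live. The following is a rough sketch only: the `check_survey` function and the `"open_end"` type label are made up for illustration and are not part of any DISQO or Feedback Loop tooling.

```python
MAX_QUESTIONS = 10  # survey length cap described in the text
MAX_OPEN_ENDS = 2   # recommended number of open-ended questions


def check_survey(question_types):
    """Return a list of human-readable problems; an empty list means OK.

    `question_types` holds one label per question, e.g. "single_select"
    or "open_end" (hypothetical labels).
    """
    problems = []
    if len(question_types) > MAX_QUESTIONS:
        problems.append(
            f"{len(question_types)} questions exceeds the cap of {MAX_QUESTIONS}"
        )
    open_ends = sum(1 for t in question_types if t == "open_end")
    if open_ends > MAX_OPEN_ENDS:
        problems.append(
            f"{open_ends} open ends exceeds the limit of {MAX_OPEN_ENDS}"
        )
    return problems
```

A survey with eight closed questions and two open ends passes with no problems reported, while one with twelve questions and three open ends is flagged on both counts.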

7. Use an Introduction and Instructions

The introduction is the first thing respondents see when they come into your survey. This is your chance to captivate them and set expectations for the survey experience. The most common feedback we hear from respondents is that introductions are misleading, specifically about which activities or tasks to expect in the survey and how long the survey will take to complete.

Additionally, instructions throughout the survey can help to re-engage and reclaim respondents’ attention. At DISQO, we use instructions and formatting (bold/italics) to indicate differences between questions or prototypes that may look the same. For example, say you are testing three concepts that are similar. We find that indicating to respondents “this is Concept 1 of 3” helps them to reset and adequately evaluate the differences between concepts.

8. The Golden Rule Applies

Use your manners. Survey participants are people, too. They don’t have to take your survey, or any surveys for that matter. What makes you comfortable speaking to a stranger or offering your opinions? “Please” and “Thank you” go a long way in the real world, and the same is true for surveys.

At DISQO, we believe that we have a responsibility to treat respondents better and create more compelling survey experiences. There is a wealth of research-on-research experiments and articles that regard respondent experience as paramount to the longevity of the research industry - after all, if we don’t have respondents to take our surveys, we won’t have data from which to derive insights.

9. Ask for feedback

When all else fails, ask respondents for feedback on your surveys. Just as researchers, innovators, and product managers want feedback on the products, services, and experiences we create, we must regard surveys as an extension of ourselves, our brand, and our work. At DISQO, we assume positive intentions and pursue growth and learning - it’s not that respondents don’t care about providing feedback or deliberately want to give us poor quality data. We regularly ask survey participants about their preferences and build our experiences around that feedback to ensure we are promoting a legacy of a great participant experience.

We are hopeful that you’ve learned something from our standards around question design and survey design. At DISQO, we believe that these are small steps that we can take as researchers to enable a thriving research industry for years to come. After all, survey participants are priceless.
