Data Quality Starts With You - How to Write Great Survey Questions

Data quality is the most important component of research. If you aren’t achieving high-quality, credible results, why invest time and money in conducting research at all?

This is unquestionably true of the customer experience research generated by DISQO Experience Suite, because our platform moves rapidly, going from objective to report in 72 hours. In today’s world, there simply isn’t time to replace poor-quality data. Anything unusable is tossed out, resulting in smaller sample sizes for quick, iterative decision-making.

Data quality is a contentious topic. Every player in the research space has a different opinion of who is ultimately responsible. At DISQO, we value accountability. We feel that ensuring good data quality starts with thoughtful questionnaire design.

Before looking at a finished survey as a whole, let’s review DISQO’s standards and best practices for designing individual questions.

1. Avoid Leading Questions

At DISQO, we take pride in our research expertise and craft well-thought-out, effective tests every time. We are well-versed in the ins and outs of participant behavior. In research, you can lead a horse to water, and it actually will drink. In other words, people will likely validate any stereotype or opinion you offer in your survey questions.

Avoid using opinions or assumptions when crafting questions and response options. Make it possible for people to disagree with your opinions and hypotheses.

For example:

- Is this app experience easier to use than the previous one?

vs.

- Which app experience was easier to use?

People are most likely to answer “yes” to the first question. The second question reduces the likelihood of a biased response by asking people to choose between the two experiences without suggesting which answer is expected.

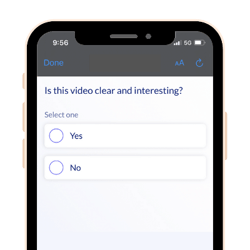

As a rule of thumb, avoid yes-or-no questions, because people tend to answer “yes” when faced with them.

2. Avoid Double-Barreled Questions

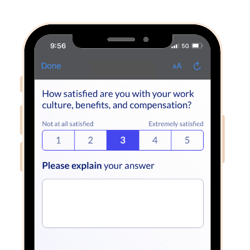

A double-barreled question merges two questions into one and allows only a single response, which makes the results impossible to interpret.

Here are examples of double-barreled questions:

Double-barreled questions are detrimental to high-quality research results. They may seem like a good way to include more information in a short survey, but how good is a survey response that cannot be accurately tied to the question it answers? To check for double-barreled questions, search for the words “and” or “or” in your survey document. While this is not foolproof, it’s a great first step.
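The “and”/“or” search described above can be automated as a first pass. The sketch below is a hypothetical helper (the name `find_conjunctions` and the sample price-and-quality question are ours, not part of any DISQO tool). It matches whole words only, so “brand” or “order” are not flagged, and a hit only means a human should take a second look, since plenty of single-barreled questions legitimately contain “and” or “or”.

```python
import re

# Whole-word, case-insensitive match for "and" / "or", so words such as
# "brand" or "order" are not flagged.
CONJUNCTIONS = re.compile(r"\b(and|or)\b", re.IGNORECASE)


def find_conjunctions(question: str) -> list[str]:
    """Return the conjunctions found in a question, lowercased, in order.

    A non-empty result means the question deserves a second look for
    double-barreling; it is not proof that the question is double-barreled.
    """
    return [match.lower() for match in CONJUNCTIONS.findall(question)]


questions = [
    # Hypothetical double-barreled question: price and quality in one item.
    "How satisfied are you with the price and quality of this product?",
    "Which app experience was easier to use?",
]

for q in questions:
    hits = find_conjunctions(q)
    status = "review" if hits else "ok"
    print(f"{status}: {q} {hits}")
```

Flagged questions can usually be split into two separate items, one per topic.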

3. Avoid Absolutes

In the same spirit as accepting neutrality, researchers should avoid absolute language in their questions. Absolutes are terms like “always”, “never”, “every”, and “all”. These terms are extreme and often exaggerate opinions. Just as you would avoid yes-or-no questions, give people room to express nuanced opinions, lifestyles, and behaviors.

This is an extreme example that doesn’t elicit the truth, because people may drink coffee most days or some days, but neither every day nor never. Rarely is anything black and white, or all or nothing.
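A keyword scan can catch absolute terms before a survey goes live. The sketch below is hypothetical: `find_absolutes` and its term list are our own, seeded with the four terms named above, and the coffee questions are illustrative. It matches whole words only, yet it will still flag harmless instructions such as “Select all that apply”, so treat each hit as a prompt for review rather than an error.

```python
import re

# The four absolute terms named in this section; extend the tuple as needed.
ABSOLUTES = ("always", "never", "every", "all")

# Whole-word, case-insensitive match so "overall" or "everyone" are not flagged.
ABSOLUTE_PATTERN = re.compile(r"\b(" + "|".join(ABSOLUTES) + r")\b", re.IGNORECASE)


def find_absolutes(text: str) -> list[str]:
    """Return absolute terms found in a question or answer option, lowercased."""
    return [match.lower() for match in ABSOLUTE_PATTERN.findall(text)]


# Hypothetical questions: an absolute version and a frequency-based rewrite.
print(find_absolutes("Do you drink coffee every day?"))  # ['every']
print(find_absolutes("On how many days in a typical week do you drink coffee?"))  # []
```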

4. Offer Balanced Answer Options

We talked about leading questions in our first point, but it’s worth mentioning again in the context of scale questions. Scales and answer options should always be balanced to avoid projecting opinions onto people.

Let’s take a look at an example:

How much do you agree with the following statement?

Renters insurance makes me feel secure.

Agree Strongly

Agree Somewhat

Agree Slightly

Neutral

Disagree

Here, three of the five points are varying degrees of “Agree”, while only one allows disagreement. This approach often produces falsely positive data, because it offers people more opportunities to agree with the statement than to disagree with it.

A better approach would be to reframe the scale to be balanced:

How much do you agree with the following statement?

Renters insurance makes me feel secure.

Agree Strongly

Agree Somewhat

Neither Agree nor Disagree

Disagree Somewhat

Disagree Strongly
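One way to sanity-check an agreement scale is to count the options on each side of the midpoint. The sketch below is hypothetical (`count_sides` and `is_balanced` are our own names) and assumes labels use the standalone words “agree” and “disagree”; it applies that count to the two renters insurance scales above.

```python
def count_sides(options: list[str]) -> tuple[int, int]:
    """Count agree-side and disagree-side options on an agreement scale.

    Assumes labels contain the standalone words "agree" / "disagree".
    Options that mention both (e.g. "Neither Agree nor Disagree") or
    neither (e.g. "Neutral") are treated as midpoints and not counted.
    """
    agree = disagree = 0
    for option in options:
        words = option.lower().split()
        has_agree = "agree" in words
        has_disagree = "disagree" in words
        if has_agree and not has_disagree:
            agree += 1
        elif has_disagree and not has_agree:
            disagree += 1
    return agree, disagree


def is_balanced(options: list[str]) -> bool:
    """A scale is balanced when both sides offer the same number of options."""
    agree, disagree = count_sides(options)
    return agree == disagree


unbalanced = ["Agree Strongly", "Agree Somewhat", "Agree Slightly", "Neutral", "Disagree"]
balanced = ["Agree Strongly", "Agree Somewhat", "Neither Agree nor Disagree",
            "Disagree Somewhat", "Disagree Strongly"]

print(count_sides(unbalanced), is_balanced(unbalanced))  # (3, 1) False
print(count_sides(balanced), is_balanced(balanced))      # (2, 2) True
```

Equal counts are necessary but not sufficient: the wording on each side should also mirror the other (“Somewhat” against “Somewhat”, “Strongly” against “Strongly”).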

5. Accept Neutrality

Too often in research, we require responses when the person doesn’t actually have an opinion on the topic. In our previous example, what would happen if the neutral answer option were removed?

How much do you agree or disagree with the following statement?

Renters insurance makes me feel secure.

Agree Strongly

Agree Somewhat

Disagree Somewhat

Disagree Strongly

This essentially forces people to either agree or disagree with the statement. Isn’t neutrality valuable? Suppose the team evaluating the survey data plans to incorporate security into its messaging. If neutrality isn’t allowed, people who feel indifferent are pushed to one side of the scale, and the hypothesis that security resonates may never be refuted.

6. Provide an Opt Out

It is important to remember that not every situation applies to every individual. It is possible, if not probable, that people do not have an opinion or do not use a well-known brand. After all, there are SO many options in everyday life!

Take for example:

What soft drink do you drink most often? Choose one.

Coke

Diet Coke

Sprite

Mountain Dew

Dr. Pepper

Diet Dr. Pepper

Pepsi

Other (Specify)

None of the above

In this example, “Other” and “None of the above” capture the same information: the person does not drink any of the soft drink brands listed. Instead, offer “Do not drink soft drinks” in place of “None of the above”, and keep the “Other” option to capture participants who drink soft drinks, just not the ones listed.

Additionally, it is important to avoid combining opt-out responses.

For example:

What soft drink do you drink most often? Choose one.

Coke

Diet Coke

Sprite

Mountain Dew

Dr. Pepper

Diet Dr. Pepper

Pepsi

None of the above / Do not drink soft drinks

If a large number of people selected the final option, it would be impossible to tell whether they don’t drink soft drinks or drink a brand that isn’t listed. This distinction is key.

7. Avoid Obscure or Mistakable Language

The ultimate goal of research is to make decisions based on the data collected and the insights derived. As researchers and product managers, we are sometimes so close to our product or service that we lose sight of what is and is not common knowledge. Around the office, we use technical jargon and abbreviations that are well-known in our world but may not be understood by the general population. When designing and executing research, humanize your survey and use the language you would use in a typical conversation outside of work.

To illustrate, assume you work for an insurance provider. When asking people about their current coverage, you ask:

Which of the following does your current homeowners insurance policy cover? Select all that apply.

Dwelling

Structure

Personal Liability

Loss of Use

Personal Property

Even if the audience you are speaking to is the decision maker for homeowners insurance, they may not know offhand what each of the coverage options listed includes. When in doubt, provide examples, and keep them brief to avoid respondent fatigue.

Additionally, avoid slang and words with multiple meanings. Take the word “key”, which has a number of meanings:

- Musical pitch(es)

- Device used to start a car

- Computer/phone button used to type or dial

- Important or necessary component

Think about your intended audience, and write in the everyday language of the people who will answer the questions.

8. Avoid Excessive Lists or Options

While it’s important that people can give honest opinions, it’s equally important not to overwhelm them with choices. People make choices every minute of every day, both consciously and unconsciously. Enough answer options help avoid biasing responses, but too many options increase the cognitive load on participants, which can lead to dropout or unreliable data.

To ensure you cover all relevant answer options, include an “Other” option followed by an open-text field. But don’t add an open-text option if you don’t intend to look at or use the data, because you’re asking people to spend time and effort writing out their thoughts. At DISQO, we aim for a maximum of eight answer options per question, and we use fewer wherever possible.
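These two rules can be turned into a quick pre-launch check. The sketch below is hypothetical (`check_options`, `will_analyze_open_text`, and the generic brand list are our own), takes the eight-option ceiling from this guideline, and assumes every listed option counts toward that ceiling, including opt-outs; teams may count differently.

```python
# Ceiling from the guideline above; this sketch counts every option,
# including opt-outs such as "Other" (an assumption).
MAX_OPTIONS = 8


def check_options(options: list[str], will_analyze_open_text: bool) -> list[str]:
    """Return review warnings for one question's answer options.

    An empty list means neither of these two simple checks flagged anything.
    """
    warnings = []
    if len(options) > MAX_OPTIONS:
        warnings.append(f"{len(options)} options; aim for {MAX_OPTIONS} or fewer")
    # Treat any option starting with "Other" as an open-text write-in.
    has_other = any(opt.strip().lower().startswith("other") for opt in options)
    if has_other and not will_analyze_open_text:
        warnings.append('"Other" write-in included, but no plan to read the responses')
    return warnings


coverage = ["Dwelling", "Structure", "Personal Liability", "Loss of Use", "Personal Property"]
# Hypothetical overlong list: ten generic brands plus a write-in.
brands = [f"Brand {i}" for i in range(1, 11)] + ["Other (Specify)"]

print(check_options(coverage, will_analyze_open_text=False))  # []
print(check_options(brands, will_analyze_open_text=False))
```

The open-text flag is deliberately an explicit argument: whether anyone will read the write-ins is a planning decision that no script can infer from the question text.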

Stay tuned for the next installment of this blog, where we share our standards for ensuring a positive participant experience through holistic survey design, including the unexpected ways this contributes to data quality as well as the longevity of the research industry.

Ready to optimize your questionnaires? Contact us today.
